
Cyber-Risk Analysis and Quantification

This research line at TU/e SEC is led by Dr. Luca Allodi and is focussed on quantitative aspects of cybersecurity risk assessment. This line of research is also informed by results from the attacker economics research track.

Vulnerability remediation

An important aspect of IT threat management is vulnerability management. The rationale is clear: because vulnerabilities allow attackers to act on a system through exploits and malware, fixing them is a priority in any IT risk management policy.

However, fixing vulnerabilities means modifying existing software. This is generally undesirable as modifications may, apart from fixing the vulnerability:

- break specific software functionalities (e.g. interaction with other system components or software) that affect the business process;

- cause expensive consequences such as system downtime, or require significant personnel time;

- introduce new vulnerabilities.

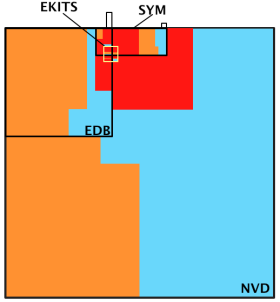

The usual IT response to that is: “any severe-enough vulnerability must be fixed”. The industry standard for measuring vulnerability severity is the Common Vulnerability Scoring System (CVSS), maintained by FIRST and used by NIST to score entries in the National Vulnerability Database (NVD), but CVSS severity is known to be uncorrelated with actual exploitation (Allodi et al. TISSEC 2014). The figure above shows a Venn diagram of all vulnerabilities (NVD), vulnerabilities with a proof-of-concept exploit (EDB), vulnerabilities traded in the black markets (EKITS), and vulnerabilities exploited at scale (SYM). Areas are proportional to the number of vulnerabilities, and colors map to High (red), Medium (yellow), and Low (blue) CVSS severities (NVD). As one can see, CVSS severity does not appear to be a good predictor of the probability of inclusion in SYM, an insight that remains valid after more formally controlling for possible confounding factors (Allodi et al. TISSEC 2014).
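Whether a severity label is a good predictor of exploitation can be made concrete with an odds ratio computed from a 2×2 table, in the spirit of a case-control comparison. The following is a minimal sketch, not the analysis from the cited paper: the function name `odds_ratio`, the choice of a Woolf (log-based) confidence interval, and the counts in the usage example are illustrative assumptions.

```python
from math import exp, log, sqrt


def odds_ratio(exposed_cases, unexposed_cases,
               exposed_controls, unexposed_controls, z=1.96):
    """Odds ratio with a Woolf (log-based) confidence interval.

    cases    = vulnerabilities observed as exploited (e.g. present in SYM)
    controls = vulnerabilities not observed as exploited
    exposed  = vulnerabilities carrying the label under test (e.g. High CVSS)
    z        = normal quantile for the interval (1.96 gives roughly 95%)
    """
    cells = (exposed_cases, unexposed_cases, exposed_controls, unexposed_controls)
    if min(cells) <= 0:
        raise ValueError("all four cells must be positive for this estimator")
    a, c, b, d = cells  # a, b: exposed; c, d: unexposed
    estimate = (a * d) / (b * c)
    std_err = sqrt(1 / a + 1 / b + 1 / c + 1 / d)
    lower = exp(log(estimate) - z * std_err)
    upper = exp(log(estimate) + z * std_err)
    return estimate, lower, upper


# Hypothetical counts, invented for illustration only (not data from the paper).
estimate, lower, upper = odds_ratio(
    exposed_cases=10, unexposed_cases=5,
    exposed_controls=20, unexposed_controls=40,
)
print(f"OR = {estimate:.2f}, 95% CI = [{lower:.2f}, {upper:.2f}]")
```

An odds ratio whose interval includes 1 gives no evidence that the label separates exploited from non-exploited vulnerabilities. A real study would also need to control for confounders, which this sketch does not attempt.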


This has led to poor vulnerability management practices: patching teams are overwhelmed by the sheer number of patches to install, which nonetheless cannot be applied straightforwardly because of the concerns outlined above.

The fix-everything approach reflects the general concern that attackers may get in through any available path, i.e. may exploit any vulnerability at any time. Policy considerations on this can be found in this article.

Attacker environment and risk of attack

Attack types

Cyber-attacks can be roughly classified in two categories:

- Targeted cyber-attacks: these attacks target specific systems and organizations and are typically carried out by sophisticated, technically advanced attackers, such as nation-state agencies or resourceful enterprises (e.g. for espionage purposes). They may rely on so-called “0-day” exploits, i.e. exploits for a vulnerability that is unknown to the defender (e.g. because the attacker discovered it). These attacks are very rare: a 2012 study found that only a small fraction of overall attacks involve 0-days (Bilge et al. CCS 2012).

- Untargeted cyber-attacks: these attacks are launched against the population of Internet users at large. Vulnerable targets end up being infected, whereas non-vulnerable targets remain unaffected. The attacker does not target specific systems or users, but rather a class of users with certain characteristics. These attacks are by far the most common and exploit well-known vulnerabilities. For example, the WannaCry malware (2017) exploited an already-patched vulnerability and affected hundreds of thousands of systems worldwide without targeting any specific organization: the UK NHS fell victim to the malware not because of attacker interest in its systems, but because of its reliance on old software configurations.

Factors of risk: attacker economics

Whereas targeted attack scenarios vary on a case-by-case basis, untargeted attacks can be characterized more generally. Given their weight in the overall threat scenario, the remainder of this page focuses on untargeted attacks.

Untargeted attacks are used to achieve a number of impacts on victim systems; for example, installing banking Trojans; stealing credentials, credit card numbers, and other private information; using infected systems to mine bitcoins or other virtual currency; launching denial of service attacks through botnets; etc.

This has led to the commodification of cyber-attacks, whereby attackers can buy attack technology and the products of attacks (e.g. spam services) from other attackers.

While this obviously simplifies the attack process for the attacker (who does not need to be technically sophisticated or to engineer every attack from scratch), it also limits the likely sources of attack, as these will coincide with the market’s offering/portfolio. More details on this are given at this page.
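If likely attacks coincide with what the markets offer, a patching policy can be judged by how much work it creates and how many of the exploited vulnerabilities it actually covers. The following is a minimal sketch under stated assumptions: the `Vuln` record, the `evaluate` helper, the 7.0 severity threshold, and the six-entry inventory are all hypothetical and deliberately contrived to show the mechanics; they are not measured data.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Vuln:
    vuln_id: str             # placeholder identifier, not a real CVE
    cvss: float              # CVSS base score
    in_market: bool          # offered in an exploit kit / black market
    exploited_in_wild: bool  # observed as exploited at scale


def evaluate(policy: Callable[[Vuln], bool], vulns: List[Vuln]) -> Dict[str, float]:
    """Workload, coverage and efficiency of a 'patch if policy(v)' rule."""
    selected = [v for v in vulns if policy(v)]
    exploited = [v for v in vulns if v.exploited_in_wild]
    hits = [v for v in selected if v.exploited_in_wild]
    return {
        "workload": len(selected),  # patches to apply
        "coverage": len(hits) / len(exploited) if exploited else 0.0,
        "efficiency": len(hits) / len(selected) if selected else 0.0,
    }


# Contrived inventory, invented for illustration only.
inventory = [
    Vuln("VULN-A", 9.8, in_market=False, exploited_in_wild=False),
    Vuln("VULN-B", 9.0, in_market=True, exploited_in_wild=True),
    Vuln("VULN-C", 7.5, in_market=False, exploited_in_wild=False),
    Vuln("VULN-D", 7.2, in_market=False, exploited_in_wild=False),
    Vuln("VULN-E", 6.8, in_market=True, exploited_in_wild=True),
    Vuln("VULN-F", 4.3, in_market=False, exploited_in_wild=False),
]

severity_policy = evaluate(lambda v: v.cvss >= 7.0, inventory)
market_policy = evaluate(lambda v: v.in_market, inventory)
print("severity threshold:", severity_policy)
print("market presence:   ", market_policy)
```

Here coverage is the share of exploited vulnerabilities the policy would patch, and efficiency is the share of applied patches that address an exploited vulnerability. How the two policies compare on real data is an empirical question that this toy inventory cannot answer.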

Quantitative Risk Models

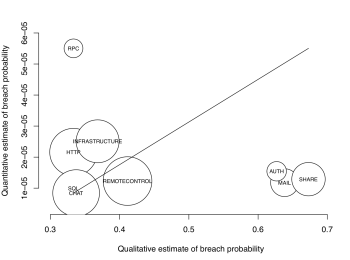

We propose to exploit this core idea to quantify the risk of exploit more precisely (Allodi et al. 2013 IWCC), and to build reproducible, objective, and scientific risk quantification models (Allodi et al. RISA 2017). The bubble plot above shows the disparity between a traditional, matrix-based, qualitative risk assessment and a quantitative approach for different asset types in a network (data from a large EU organisation in the financial sector). The size of each bubble is proportional to the number of assets in that category. The mismatch is clear, both in the scale of the prediction and in the relative ordering of asset criticality. For example, according to the internal assessors, MAIL, SHARE, and AUTHentication services represent the highest risk, whereas the quantitative method places them amongst the lowest in the relative ordering. The important aspect to consider here is that the quantitative method is white-box, whereas the qualitative approach is black-box and cannot be reproduced, as a different set of experts will likely come up with a different assessment (Cox RISA 2008).
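The disagreement in relative ordering between two assessments can be summarized with a rank correlation. The following is a minimal sketch using Kendall's tau-a, which is one possible choice and not necessarily the comparison used in the cited work. The function name `kendall_tau_a`, the WEB and DB categories, and every score below are hypothetical values invented to mirror the qualitative pattern described above; they are not the organisation's data.

```python
from itertools import combinations


def kendall_tau_a(scores_a, scores_b):
    """Kendall's tau-a between two scorings of the same items.

    Both arguments map item name -> score. Pairs tied in either scoring
    count as neither concordant nor discordant.
    """
    if scores_a.keys() != scores_b.keys():
        raise ValueError("both scorings must cover the same items")
    items = sorted(scores_a)
    if len(items) < 2:
        raise ValueError("need at least two items to compare orderings")
    concordant = discordant = 0
    for x, y in combinations(items, 2):
        product = (scores_a[x] - scores_a[y]) * (scores_b[x] - scores_b[y])
        if product > 0:
            concordant += 1
        elif product < 0:
            discordant += 1
    total_pairs = len(items) * (len(items) - 1) // 2
    return (concordant - discordant) / total_pairs


# Hypothetical scores, invented for illustration only.
qualitative = {"MAIL": 5, "SHARE": 4, "AUTH": 3, "WEB": 2, "DB": 1}  # ordinal matrix level
quantitative = {"MAIL": 0.02, "SHARE": 0.01, "AUTH": 0.03, "WEB": 0.20, "DB": 0.10}  # estimated risk

tau = kendall_tau_a(qualitative, quantitative)
print(f"Kendall tau-a = {tau:.2f}")
```

A value near 1 means the two assessments rank asset categories alike, whereas a negative value means the orderings are mostly reversed. Because the computation is explicit, anyone with the same inputs obtains the same number, which is the reproducibility property the white-box approach aims for.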


Creating objective risk estimation methods remains an open challenge, in particular adapting existing risk metrics (like CVSS) to an organisation's operating environment without incurring overwhelming implementation costs.

References

- Luca Allodi and Fabio Massacci (2017), Security Events and Vulnerability Data for Cybersecurity Risk Estimation. Risk Analysis, 37: 1606–1627. doi:10.1111/risa.12864 PDF

- Luca Allodi, Fabio Massacci. Comparing vulnerability severity and exploits using case-control studies. ACM Transactions on Information and System Security (TISSEC). 17, 1, Article 1 (August 2014), 20 pages. PDF

- Luca Allodi. (2015, March). The heavy tails of vulnerability exploitation. In International Symposium on Engineering Secure Software and Systems (pp. 133-148). Springer, Cham. PDF

- Luca Allodi. Attacker economics for Internet-scale vulnerability risk assessment (Extended Abstract) Research proposal, in Proceedings of Usenix LEET 2013. PDF

- Luca Allodi, Fabio Massacci. How CVSS is DOSsing your patching policy (and wasting your money). Presentation at BlackHat USA 2013. Slides

- Luca Allodi, Woohyun Shim, Fabio Massacci. Quantitative assessment of risk reduction with cybercrime black market monitoring. Proceedings of IEEE S&P 2013 International Workshop on Cyber Crime. PDF

- Luca Allodi, Fabio Massacci. A Preliminary Analysis of Vulnerability Scores for Attacks in Wild. Proceedings of BADGERS 2012 CCS Workshop. PDF